
When using the Cloudflare Vite plugin to build and deploy Workers, a Wrangler configuration file is now optional for assets-only (static) sites. If no wrangler.toml, wrangler.json, or wrangler.jsonc file is found, the plugin generates sensible defaults for an assets-only site. The name is based on the package.json or the project directory name, and the compatibility_date uses the latest date supported by your installed Miniflare version.

This allows easier setup for static sites using Vite. Note that SPAs will still need to set assets.not_found_handling to single-page-application ↗ in order to function correctly.

The Cloudflare Vite plugin now supports programmatic configuration of Workers without a Wrangler configuration file. You can use the config option to define Worker settings directly in your Vite configuration, or to modify existing configuration loaded from a Wrangler config file. This is particularly useful when integrating with other build tools or frameworks, as it allows them to control Worker configuration without needing users to manage a separate config file.

The Vite plugin's new config option accepts either a partial configuration object or a function that receives the current configuration and returns overrides. This option is applied after any config file is loaded, allowing the plugin to override specific values or define Worker configuration entirely in code.

Set config to an object to provide configuration values that merge with defaults and config file settings:

```ts
import { defineConfig } from "vite";
import { cloudflare } from "@cloudflare/vite-plugin";

export default defineConfig({
  plugins: [
    cloudflare({
      config: {
        name: "my-worker",
        compatibility_flags: ["nodejs_compat"],
        send_email: [
          {
            name: "EMAIL",
          },
        ],
      },
    }),
  ],
});
```

Use a function to modify the existing configuration:

```ts
import { defineConfig } from "vite";
import { cloudflare } from "@cloudflare/vite-plugin";

export default defineConfig({
  plugins: [
    cloudflare({
      config: (userConfig) => {
        delete userConfig.compatibility_flags;
      },
    }),
  ],
});
```

Return an object with values to merge:

```ts
import { defineConfig } from "vite";
import { cloudflare } from "@cloudflare/vite-plugin";

export default defineConfig({
  plugins: [
    cloudflare({
      config: (userConfig) => {
        if (!userConfig.compatibility_flags.includes("no_nodejs_compat")) {
          return { compatibility_flags: ["nodejs_compat"] };
        }
      },
    }),
  ],
});
```

Auxiliary Workers also support the config option, enabling multi-Worker architectures without config files.

Define auxiliary Workers without config files using config inside the auxiliaryWorkers array:

```ts
import { defineConfig } from "vite";
import { cloudflare } from "@cloudflare/vite-plugin";

export default defineConfig({
  plugins: [
    cloudflare({
      config: {
        name: "entry-worker",
        main: "./src/entry.ts",
        services: [{ binding: "API", service: "api-worker" }],
      },
      auxiliaryWorkers: [
        {
          config: {
            name: "api-worker",
            main: "./src/api.ts",
          },
        },
      ],
    }),
  ],
});
```

For more details and examples, see Programmatic configuration.
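To make the object-versus-function behavior of the config option concrete, here is a minimal sketch of how such an option could be resolved. It is not the plugin's actual implementation: the `WorkerConfig` type, the `resolveConfig` helper, and the shallow merge are all assumptions made for illustration.

```ts
// Hypothetical, simplified shape of a Worker configuration.
type WorkerConfig = {
  name?: string;
  main?: string;
  compatibility_flags?: string[];
};

// The option is either a partial object or a function that receives the
// current configuration and may return overrides.
type ConfigOption =
  | Partial<WorkerConfig>
  | ((current: WorkerConfig) => Partial<WorkerConfig> | void);

// Hypothetical helper: applies the option after the config file is loaded.
function resolveConfig(fileConfig: WorkerConfig, option?: ConfigOption): WorkerConfig {
  // Copy the loaded config so the caller's object is never mutated.
  const current = JSON.parse(JSON.stringify(fileConfig)) as WorkerConfig;
  if (option === undefined) return current;

  if (typeof option === "function") {
    // The function may mutate `current` in place, return overrides, or both.
    const overrides = option(current);
    return overrides ? { ...current, ...overrides } : current;
  }

  // Assumption: a shallow merge where the option's values win.
  return { ...current, ...option };
}
```

With this sketch, `resolveConfig({ name: "a" }, { name: "b" })` yields a configuration named `b`, and a function that deletes `compatibility_flags` yields a configuration without that key.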

Earlier this year, we announced the launch of the new Terraform v5 Provider. We are aware of the high number of issues reported by the Cloudflare community related to the v5 release. We have committed to releasing improvements on a 2-3 week cadence ↗ to ensure its stability and reliability, including the v5.14 release. We have also pivoted from an issue-to-issue approach to a resource-per-resource approach ↗ - we will be focusing on specific resources to not only stabilize the resource but also ensure it is migration-friendly for those migrating from v4 to v5.

Thank you for continuing to raise issues. They make our provider stronger and help us build products that reflect your needs.

This release includes bug fixes, the stabilization of even more popular resources, and more.

Resource affected: api_shield_discovery_operation

Cloudflare continuously discovers and updates API endpoints and web assets of your web applications. To improve the maintainability of these dynamic resources, we are working on reducing the need to actively engage with discovered operations.

The corresponding public API endpoint of discovered operations ↗ is not affected and will continue to be supported.

dlp_custom_profile

partners_ent as valid enum for rate_plan.id (#6505 ↗)

We suggest waiting to migrate to v5 while we work on stabilization. This helps you avoid blocking issues while the Terraform resources are actively being stabilized ↗. We will be releasing a new migration tool in March 2026 to help support v4 to v5 transitions for our most popular resources.

Cloudflare WAF now inspects request payloads of up to 1 MB across all plans to enhance our detection capabilities for React RCE (CVE-2025-55182).

Key Findings

React payloads commonly have a default maximum size of 1 MB. Cloudflare WAF previously inspected up to 128 KB on Enterprise plans, with even lower limits on other plans.

Update: We later reinstated the maximum request-payload size the Cloudflare WAF inspects. Refer to Updating the WAF maximum payload values for details.

We are reinstating the maximum request-payload size the Cloudflare WAF inspects, with WAF on Enterprise zones inspecting up to 128 KB.

Key Findings

On December 5, 2025, we initially attempted to increase the maximum WAF payload limit to 1 MB across all plans. However, an automatic rollout for all customers proved impractical because the increase led to a surge in false positives for existing managed rules.

This issue was particularly notable within the Cloudflare Managed Ruleset and the Cloudflare OWASP Core Ruleset, impacting customer traffic.

Impact

Customers on paid plans can increase the limit to 1 MB for any of their zones by contacting Cloudflare Support. Free zones are already protected up to 1 MB and do not require any action.

You can now connect directly to remote databases and databases requiring TLS with wrangler dev.

This lets you run your Worker code locally while connecting to remote databases, without needing to use wrangler dev --remote.

The localConnectionString field and CLOUDFLARE_HYPERDRIVE_LOCAL_CONNECTION_STRING_<BINDING_NAME> environment variable can be used to configure the connection string used by wrangler dev.

```jsonc
{
  "hyperdrive": [
    {
      "binding": "HYPERDRIVE",
      "id": "your-hyperdrive-id",
      "localConnectionString": "postgres://user:password@remote-host.example.com:5432/database?sslmode=require"
    }
  ]
}
```

Learn more about local development with Hyperdrive.
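As a small illustration of the two configuration routes above, the sketch below derives the environment variable name for a binding and checks whether a connection string requests TLS. Both helper names are hypothetical, and treating `require`, `verify-ca`, and `verify-full` as the TLS-requesting `sslmode` values is an assumption based on standard Postgres connection parameters, not on wrangler's behavior.

```ts
// Builds the variable name from the documented pattern
// CLOUDFLARE_HYPERDRIVE_LOCAL_CONNECTION_STRING_<BINDING_NAME>.
// Assumption: the binding name is used exactly as written in the config.
function localConnectionEnvVar(bindingName: string): string {
  return `CLOUDFLARE_HYPERDRIVE_LOCAL_CONNECTION_STRING_${bindingName}`;
}

// Hypothetical helper: reports whether a Postgres connection string asks for TLS
// through its sslmode query parameter.
function requestsTls(connectionString: string): boolean {
  const sslmode = new URL(connectionString).searchParams.get("sslmode");
  return sslmode === "require" || sslmode === "verify-ca" || sslmode === "verify-full";
}
```

For the binding shown above, the variable name would be `CLOUDFLARE_HYPERDRIVE_LOCAL_CONNECTION_STRING_HYPERDRIVE`.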

Workers applications now use reusable Cloudflare Access policies to reduce duplication and simplify access management across multiple Workers.

Previously, enabling Cloudflare Access on a Worker created per-application policies, unique to each application. Now, we create reusable policies that can be shared across applications:

Preview URLs: All Workers preview URLs share a single "Cloudflare Workers Preview URLs" policy across your account. This policy is automatically created the first time you enable Access on any preview URL. By sharing a single policy across all preview URLs, you can configure access rules once and have them apply company-wide to all Workers which protect preview URLs. This makes it much easier to manage who can access preview environments without having to update individual policies for each Worker.

Production workers.dev URLs: When enabled, each Worker gets its own reusable policy (named <worker-name> - Production) by default. We recognize production services often have different access requirements and having individual policies here makes it easier to configure service-to-service authentication or protect internal dashboards or applications with specific user groups. Keeping these policies separate gives you the flexibility to configure exactly the right access rules for each production service. When you disable Access on a production Worker, the associated policy is automatically cleaned up if it's not being used by other applications.

This change reduces policy duplication, simplifies cross-company access management for preview environments, and provides the flexibility needed for production services. You can still customize access rules by editing the reusable policies in the Zero Trust dashboard.

To enable Cloudflare Access on your Worker:

workers.dev or Preview URLs, click Enable Cloudflare Access.

For more information on configuring Cloudflare Access for Workers, refer to the Workers Access documentation.

We have updated the terminology “Reclassify” and “Reclassifications” to “Submit” and “Submissions” respectively. This update more accurately reflects the outcome of providing these items to Cloudflare.

Submissions are leveraged to tune future variants of campaigns. To respect data sanctity, providing a submission does not change the original disposition of the emails submitted.

This applies to all Email Security packages:

The WAF rule deployed yesterday to block unsafe deserialization-based RCE has been updated. The rule description now reads “React – RCE – CVE-2025-55182”, explicitly mapping to the recently disclosed React Server Components vulnerability. Detection logic remains unchanged.

Key Findings

Rule description updated to reference React – RCE – CVE-2025-55182 while retaining existing unsafe-deserialization detection.

Impact

Improved classification and traceability with no change to coverage against remote code execution attempts.

| Ruleset | Rule ID | Legacy Rule ID | Description | Previous Action | New Action | Comments |
|---|---|---|---|---|---|---|
| Cloudflare Managed Ruleset | | N/A | React - RCE - CVE:CVE-2025-55182 | N/A | Block | Rule metadata description changed. Detection unchanged. |
| Cloudflare Free Ruleset | | N/A | React - RCE - CVE:CVE-2025-55182 | N/A | Block | Rule metadata description changed. Detection unchanged. |

This week's emergency release introduces a new rule to block a critical RCE vulnerability in widely-used web frameworks through unsafe deserialization patterns.

Key Findings

New WAF rule deployed for RCE Generic Framework to block malicious POST requests containing unsafe deserialization patterns. If successfully exploited, this vulnerability allows attackers with network access via HTTP to execute arbitrary code remotely.

Impact

| Ruleset | Rule ID | Legacy Rule ID | Description | Previous Action | New Action | Comments |
|---|---|---|---|---|---|---|
| Cloudflare Managed Ruleset | | N/A | RCE Generic - Framework | N/A | Block | This is a new detection. |
| Cloudflare Free Ruleset | | N/A | RCE Generic - Framework | N/A | Block | This is a new detection. |

This week’s release introduces new detections for remote code execution attempts targeting Monsta FTP (CVE-2025-34299), alongside improvements to an existing XSS detection to enhance coverage.

Key Findings

Impact

If exploited, the vulnerability enables full remote command execution on the underlying server, allowing takeover of the hosting environment, unauthorized file access, and potential lateral movement. As the flaw can be triggered without authentication on exposed Monsta FTP instances, it represents a severe risk for publicly reachable deployments.

| Ruleset | Rule ID | Legacy Rule ID | Description | Previous Action | New Action | Comments |
|---|---|---|---|---|---|---|
| Cloudflare Managed Ruleset | | N/A | Monsta FTP - Remote Code Execution - CVE:CVE-2025-34299 | Log | Block | This is a new detection |
| Cloudflare Managed Ruleset | | N/A | XSS - JS Context Escape - Beta | Log | Block | This rule is merged into the original rule "XSS - JS Context Escape" (ID: |

The latest release of @cloudflare/agents ↗ brings resumable streaming, significant MCP client improvements, and critical fixes for schedules and Durable Object lifecycle management.

AIChatAgent now supports resumable streaming, allowing clients to reconnect and continue receiving streamed responses without losing data. This is useful for:

Streams are maintained across page refreshes, broken connections, and syncing across open tabs and devices.

The MCPClientManager API has been redesigned for better clarity and control:

- registerServer() method: Register MCP servers without immediately connecting
- connectToServer() method: Establish connections to registered servers
- restoreConnectionsFromStorage() now properly handles failed connections

```ts
// Register a server to Agent
const { id } = await this.mcp.registerServer({
  name: "my-server",
  url: "https://my-mcp-server.example.com",
});

// Connect when ready
await this.mcp.connectToServer(id);

// Discover tools, prompts and resources
await this.mcp.discoverIfConnected(id);
```

The SDK now includes a formalized MCPConnectionState enum with states: idle, connecting, authenticating, connected, discovering, and ready.

MCP discovery fetches the available tools, prompts, and resources from an MCP server so your agent knows what capabilities are available. The MCPClientConnection class now includes a dedicated discover() method with improved reliability:

this.schedule(new Date(), ...) would not fire

To update to the latest version:

```bash
npm i agents@latest
```

You can now review detailed audit logs for cache purge events, giving you visibility into what purge requests were sent, what they contained, and by whom. Audit your purge requests via the Dashboard or API for all purge methods:

The detailed audit payload is visible within the Cloudflare Dashboard (under Manage Account > Audit Logs) and via the API. Below is an example of the Audit Logs v2 payload structure:

```json
{
  "action": {
    "result": "success",
    "type": "create"
  },
  "actor": {
    "id": "1234567890abcdef",
    "email": "user@example.com",
    "type": "user"
  },
  "resource": {
    "product": "purge_cache",
    "request": {
      "files": [
        "https://example.com/images/logo.png",
        "https://example.com/css/styles.css"
      ]
    }
  },
  "zone": {
    "id": "023e105f4ecef8ad9ca31a8372d0c353",
    "name": "example.com"
  }
}
```

To get started, refer to the Audit Logs documentation.
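If you process these payloads in code, a typed view of the example can help. The sketch below models only the fields shown in the sample payload above; the `PurgeAuditEvent` interface and the `summarizePurge` helper are hypothetical names, and real payloads may carry additional or different fields (for example, for purge methods other than purge by file).

```ts
// Shape inferred from the sample payload only; not an official schema.
interface PurgeAuditEvent {
  action: { result: string; type: string };
  actor: { id: string; email: string; type: string };
  resource: { product: string; request: { files?: string[] } };
  zone: { id: string; name: string };
}

// Produces a one-line, human-readable summary of a purge event.
function summarizePurge(event: PurgeAuditEvent): string {
  // `files` is treated as optional because other purge methods may omit it.
  const files = event.resource.request.files ?? [];
  return `${event.actor.email} purged ${files.length} file(s) on ${event.zone.name} (${event.action.result})`;
}
```

Applied to the sample payload, this returns `user@example.com purged 2 file(s) on example.com (success)`.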

We've partnered with Black Forest Labs (BFL) to bring their latest FLUX.2 [dev] model to Workers AI! This model excels in generating high-fidelity images with physical world grounding, multi-language support, and digital asset creation. You can also create specific super images with granular controls like JSON prompting.

Read the BFL blog ↗ to learn more about the model itself. Read our Cloudflare blog ↗ to see the model in action, or try it out yourself on our multimodal playground ↗.

Pricing documentation is available on the model page or pricing page. Note, we expect to drop pricing in the next few days after iterating on the model performance.

The model hosted on Workers AI is able to support up to 4 image inputs (512x512 per input image). Note, this image model is one of the most powerful in the catalog and is expected to be slower than the other image models we currently support. One catch to look out for is that this model takes multipart form data inputs, even if you just have a prompt.

With the REST API, the multipart form data input looks like this:

```bash
curl --request POST \
  --url 'https://api.cloudflare.com/client/v4/accounts/{ACCOUNT}/ai/run/@cf/black-forest-labs/flux-2-dev' \
  --header 'Authorization: Bearer {TOKEN}' \
  --header 'Content-Type: multipart/form-data' \
  --form 'prompt=a sunset at the alps' \
  --form steps=25 \
  --form width=1024 \
  --form height=1024
```

With the Workers AI binding, you can use it as such:

```ts
const form = new FormData();
form.append('prompt', 'a sunset with a dog');
form.append('width', '1024');
form.append('height', '1024');

// This dummy request is a temporary hack.
// We're pushing a change to address this soon.
const formRequest = new Request('http://dummy', {
  method: 'POST',
  body: form
});
const formStream = formRequest.body;
const formContentType = formRequest.headers.get('content-type') || 'multipart/form-data';

const resp = await env.AI.run("@cf/black-forest-labs/flux-2-dev", {
  multipart: {
    body: formStream,
    contentType: formContentType
  }
});
```

The parameters you can send to the model are detailed here:

- prompt (string) - Text description of the image to generate

Optional Parameters

- input_image_0 (string) - Binary image
- input_image_1 (string) - Binary image
- input_image_2 (string) - Binary image
- input_image_3 (string) - Binary image
- steps (integer) - Number of inference steps. Higher values may improve quality but increase generation time
- guidance (float) - Guidance scale for generation. Higher values follow the prompt more closely
- width (integer) - Width of the image, default 1024. Range: 256-1920
- height (integer) - Height of the image, default 768. Range: 256-1920
- seed (integer) - Seed for reproducibility

## Multi-Reference Images

The FLUX.2 model is great at generating images based on reference images. You can use this feature to apply the style of one image to another, add a new character to an image, or iterate on previously generated images. You would use it with the same multipart form data structure, with the input images in binary.

For the prompt, you can reference the images based on the index, like `take the subject of image 1 and style it like image 0` or even use natural language like `place the dog beside the woman`.

Note: you have to name the input parameter as `input_image_0`, `input_image_1`, `input_image_2` for it to work correctly. All input images must be smaller than 512x512.

```bash
curl --request POST \
  --url 'https://api.cloudflare.com/client/v4/accounts/{ACCOUNT}/ai/run/@cf/black-forest-labs/flux-2-dev' \
  --header 'Authorization: Bearer {TOKEN}' \
  --header 'Content-Type: multipart/form-data' \
  --form 'prompt=take the subject of image 1 and style it like image 0' \
  --form input_image_0=@/Users/johndoe/Desktop/icedoutkeanu.png \
  --form input_image_1=@/Users/johndoe/Desktop/me.png \
  --form steps=25 \
  --form width=1024 \
  --form height=1024
```

Through Workers AI Binding:

```ts
// Helper function to convert a ReadableStream to a Blob
async function streamToBlob(stream: ReadableStream, contentType: string): Promise<Blob> {
  const reader = stream.getReader();
  const chunks = [];

  while (true) {
    const { done, value } = await reader.read();
    if (done) break;
    chunks.push(value);
  }

  return new Blob(chunks, { type: contentType });
}

const image0 = await fetch("http://image-url");
const image1 = await fetch("http://image-url");
const form = new FormData();

const image_blob0 = await streamToBlob(image0.body, "image/png");
const image_blob1 = await streamToBlob(image1.body, "image/png");
form.append('input_image_0', image_blob0);
form.append('input_image_1', image_blob1);
form.append('prompt', 'take the subject of image 1 and style it like image 0');

// This dummy request is a temporary hack.
// We're pushing a change to address this soon.
const formRequest = new Request('http://dummy', {
  method: 'POST',
  body: form
});
const formStream = formRequest.body;
const formContentType = formRequest.headers.get('content-type') || 'multipart/form-data';

// Pass the encoded stream and its content type (which carries the multipart
// boundary), matching the single-prompt example.
const resp = await env.AI.run("@cf/black-forest-labs/flux-2-dev", {
  multipart: {
    body: formStream,
    contentType: formContentType
  }
});
```

The model supports prompting in JSON to get more granular control over images. You would pass the JSON as the value of the 'prompt' field in the multipart form data. See the JSON schema below on the base parameters you can pass to the model.
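Before the full schema, here is a minimal sketch of what a JSON prompt could look like in practice. It fills in only the fields the schema marks as required (`scene` and `subjects`, with each subject's `type`, `description`, `pose`, and `position`); the scene and subject values themselves are made up for illustration.

```ts
// Illustrative prompt object; the descriptive values are invented examples.
const jsonPrompt = {
  scene: "A quiet mountain lake at sunrise",
  subjects: [
    {
      type: "hiker",
      description: "Red jacket, grey backpack, wool hat",
      pose: "Standing and looking across the water",
      position: "foreground",
    },
  ],
};

// The JSON is serialized and sent as the value of the 'prompt' form field.
const jsonForm = new FormData();
jsonForm.append("prompt", JSON.stringify(jsonPrompt));
jsonForm.append("width", "1024");
jsonForm.append("height", "1024");
```

The resulting form can then be sent the same way as the plain-text prompt examples above.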

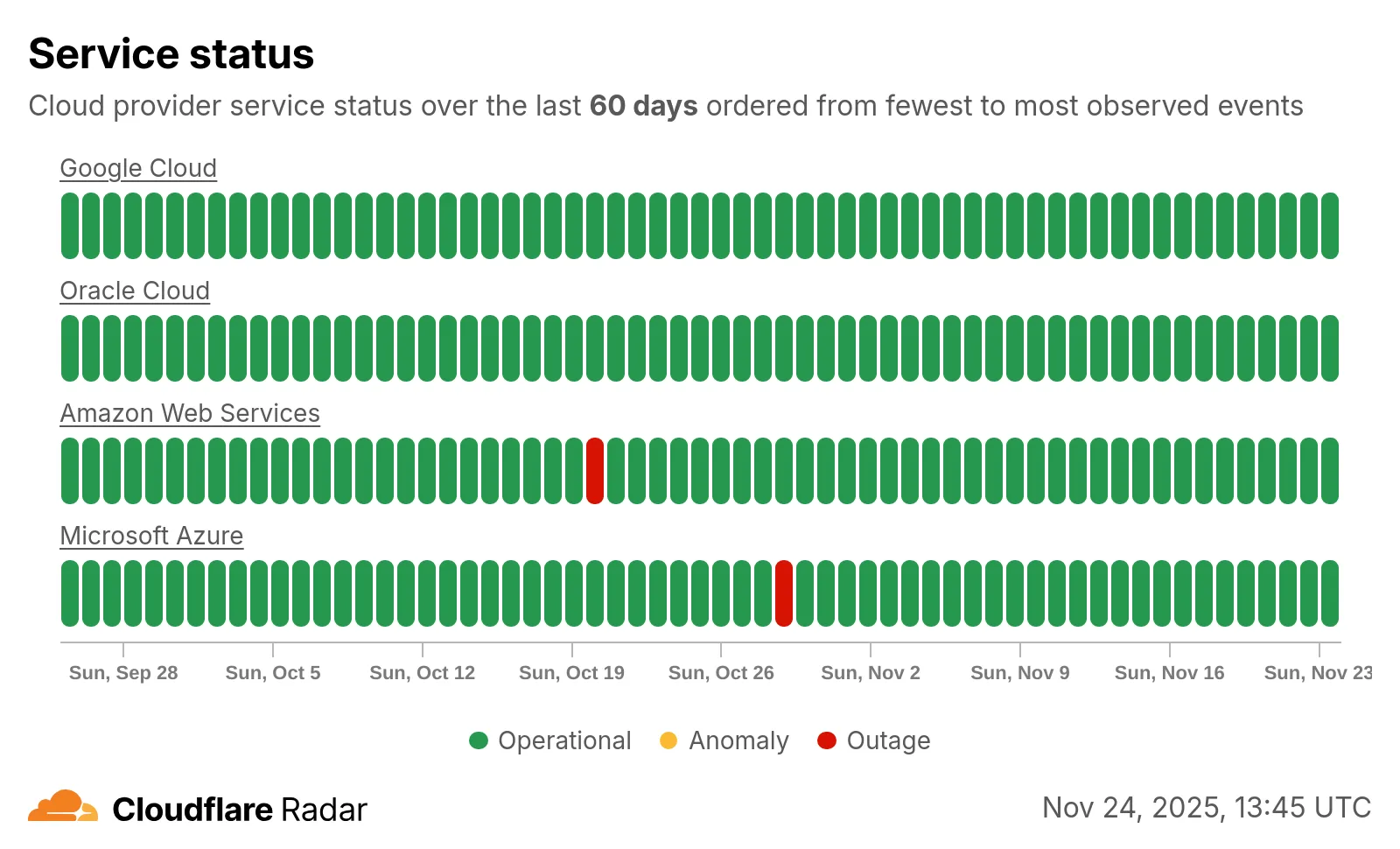

```json
{
  "type": "object",
  "properties": {
    "scene": { "type": "string", "description": "Overall scene setting or location" },
    "subjects": {
      "type": "array",
      "items": {
        "type": "object",
        "properties": {
          "type": { "type": "string", "description": "Type of subject (e.g., desert nomad, blacksmith, DJ, falcon)" },
          "description": { "type": "string", "description": "Physical attributes, clothing, accessories" },
          "pose": { "type": "string", "description": "Action or stance" },
          "position": {
            "type": "string",
            "enum": ["foreground", "midground", "background"],
            "description": "Depth placement in scene"
          }
        },
        "required": ["type", "description", "pose", "position"]
      }
    },
    "style": {
      "type": "string",
      "description": "Artistic rendering style (e.g., digital painting, photorealistic, pixel art, noir sci-fi, lifestyle photo, wabi-sabi photo)"
    },
    "color_palette": {
      "type": "array",
      "items": { "type": "string" },
      "minItems": 3,
      "maxItems": 3,
      "description": "Exactly 3 main colors for the scene (e.g., ['navy', 'neon yellow', 'magenta'])"
    },
    "lighting": {
      "type": "string",
      "description": "Lighting condition and direction (e.g., fog-filtered sun, moonlight with star glints, dappled sunlight)"
    },
    "mood": {
      "type": "string",
      "description": "Emotional atmosphere (e.g., harsh and determined, playful and modern, peaceful and dreamy)"
    },
    "background": { "type": "string", "description": "Background environment details" },
    "composition": {
      "type": "string",
      "enum": [
        "rule of thirds",
        "circular arrangement",
        "framed by foreground",
        "minimalist negative space",
        "S-curve",
        "vanishing point center",
        "dynamic off-center",
        "leading leads",
        "golden spiral",
        "diagonal energy",
        "strong verticals",
        "triangular arrangement"
      ],
      "description": "Compositional technique"
    },
    "camera": {
      "type": "object",
      "properties": {
        "angle": {
          "type": "string",
          "enum": ["eye level", "low angle", "slightly low", "bird's-eye", "worm's-eye", "over-the-shoulder", "isometric"],
          "description": "Camera perspective"
        },
        "distance": {
          "type": "string",
          "enum": ["close-up", "medium close-up", "medium shot", "medium wide", "wide shot", "extreme wide"],
          "description": "Framing distance"
        },
        "focus": {
          "type": "string",
          "enum": ["deep focus", "macro focus", "selective focus", "sharp on subject", "soft background"],
          "description": "Focus type"
        },
        "lens": {
          "type": "string",
          "enum": ["14mm", "24mm", "35mm", "50mm", "70mm", "85mm"],
          "description": "Focal length (wide to telephoto)"
        },
        "f-number": {
          "type": "string",
          "description": "Aperture (e.g., f/2.8, the smaller the number the more blurry the background)"
        },
        "ISO": {
          "type": "number",
          "description": "Light sensitivity value (comfortable range between 100 & 6400, lower = less sensitivity)"
        }
      }
    },
    "effects": {
      "type": "array",
      "items": { "type": "string" },
      "description": "Post-processing effects (e.g., 'lens flare small', 'subtle film grain', 'soft bloom', 'god rays', 'chromatic aberration mild')"
    }
  },
  "required": ["scene", "subjects"]
}
```

Radar introduces HTTP Origins insights, providing visibility into the status of traffic between Cloudflare's global network and cloud-based origin infrastructure.

The new Origins API provides the following endpoints:

- /origins - Lists all origins (cloud providers and associated regions).
- /origins/{origin} - Retrieves information about a specific origin (cloud provider).
- /origins/timeseries - Retrieves normalized time series data for a specific origin, including the following metrics:
  - REQUESTS: Number of requests
  - CONNECTION_FAILURES: Number of connection failures
  - RESPONSE_HEADER_RECEIVE_DURATION: Duration of the response header receive
  - TCP_HANDSHAKE_DURATION: Duration of the TCP handshake
  - TCP_RTT: TCP round trip time
  - TLS_HANDSHAKE_DURATION: Duration of the TLS handshake
- /origins/summary - Retrieves HTTP requests to origins summarized by a dimension.
- /origins/timeseries_groups - Retrieves timeseries data for HTTP requests to origins grouped by a dimension.

The following dimensions are available for the summary and timeseries_groups endpoints:

- region: Origin region
- success_rate: Success rate of requests (2XX versus 5XX response codes)
- percentile: Percentiles of metrics listed above

Additionally, the Annotations and Traffic Anomalies APIs have been extended to support origin outages and anomalies, enabling automated detection and alerting for origin infrastructure issues.

Check out the new Radar page ↗.

This week highlights enhancements to detection signatures improving coverage for vulnerabilities in FortiWeb, linked to CVE-2025-64446, alongside new detection logic expanding protection against PHP Wrapper Injection techniques.

Key Findings

This vulnerability enables an unauthenticated attacker to bypass access controls by abusing the CGIINFO header. The latest update strengthens detection logic to ensure a reliable identification of crafted requests attempting to exploit this flaw.

Impact

CGIINFO header to FortiWeb’s backend CGI handler. Successful exploitation grants unintended access to restricted administrative functionality, potentially enabling configuration tampering or system-level actions.

| Ruleset | Rule ID | Legacy Rule ID | Description | Previous Action | New Action | Comments |
|---|---|---|---|---|---|---|
| Cloudflare Managed Ruleset | | N/A | FortiWeb - Authentication Bypass via CGIINFO Header - CVE:CVE-2025-64446 | Log | Block | This is a new detection |
| Cloudflare Managed Ruleset | | N/A | PHP Wrapper Injection - Body - Beta | Log | Disabled | This rule has been merged into the original rule "PHP Wrapper Injection - Body" (ID: |
| Cloudflare Managed Ruleset | | N/A | PHP Wrapper Injection - URI - Beta | Log | Disabled | This rule has been merged into the original rule "PHP Wrapper Injection - URI" (ID: |

Containers now support mounting R2 buckets as FUSE (Filesystem in Userspace) volumes, allowing applications to interact with R2 using standard filesystem operations.

Common use cases include:

FUSE adapters like tigrisfs ↗, s3fs ↗, and gcsfuse ↗ can be installed in your container image and configured to mount buckets at startup.

```dockerfile
FROM alpine:3.20

# Install FUSE and dependencies
RUN apk update && \
    apk add --no-cache ca-certificates fuse curl bash

# Install tigrisfs
RUN ARCH=$(uname -m) && \
    if [ "$ARCH" = "x86_64" ]; then ARCH="amd64"; fi && \
    if [ "$ARCH" = "aarch64" ]; then ARCH="arm64"; fi && \
    VERSION=$(curl -s https://api.github.com/repos/tigrisdata/tigrisfs/releases/latest | grep -o '"tag_name": "[^"]*' | cut -d'"' -f4) && \
    curl -L "https://github.com/tigrisdata/tigrisfs/releases/download/${VERSION}/tigrisfs_${VERSION#v}_linux_${ARCH}.tar.gz" -o /tmp/tigrisfs.tar.gz && \
    tar -xzf /tmp/tigrisfs.tar.gz -C /usr/local/bin/ && \
    rm /tmp/tigrisfs.tar.gz && \
    chmod +x /usr/local/bin/tigrisfs

# Create startup script that mounts bucket
RUN printf '#!/bin/sh\n\
    set -e\n\
    mkdir -p /mnt/r2\n\
    R2_ENDPOINT="https://${R2_ACCOUNT_ID}.r2.cloudflarestorage.com"\n\
    /usr/local/bin/tigrisfs --endpoint "${R2_ENDPOINT}" -f "${BUCKET_NAME}" /mnt/r2 &\n\
    sleep 3\n\
    ls -lah /mnt/r2\n\
    ' > /startup.sh && chmod +x /startup.sh

CMD ["/startup.sh"]
```

See the Mount R2 buckets with FUSE example for a complete guide on mounting R2 buckets and/or other S3-compatible storage buckets within your containers.

Containers and Sandboxes pricing for CPU time is now based on active usage only, instead of provisioned resources.

This means that you now pay less for Containers and Sandboxes.

Imagine running the standard-2 instance type for one hour, which can use up to 1 vCPU, but on average you use only 20% of your CPU capacity.

CPU-time is priced at $0.00002 per vCPU-second.

Previously, you would be charged for the CPU allocated to the instance multiplied by the time it was active, in this case 1 hour.

CPU cost would have been: $0.072 — 1 vCPU * 3600 seconds * $0.00002

Now, since you are only using 20% of your CPU capacity, your CPU cost is cut to 20% of the previous amount.

CPU cost is now: $0.0144 — 1 vCPU * 3600 seconds * $0.00002 * 20% utilization

This can significantly reduce costs for Containers and Sandboxes.
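The arithmetic above can be reproduced with a short sketch. The price constant comes from the example; the `cpuCost` function and its parameter names are illustrative only, and real billing may involve details (such as rounding or included usage) that this ignores.

```ts
// Price from the example above: $0.00002 per vCPU-second.
const PRICE_PER_VCPU_SECOND = 0.00002;

// Estimates CPU cost in dollars. `utilization` is the average fraction of the
// allocated vCPU actually used (1 reproduces the previous, provisioned model).
function cpuCost(vcpus: number, seconds: number, utilization: number = 1): number {
  return vcpus * seconds * PRICE_PER_VCPU_SECOND * utilization;
}

const provisioned = cpuCost(1, 3600);      // previous model: about $0.072
const activeOnly = cpuCost(1, 3600, 0.2);  // new model at 20% utilization: about $0.0144
console.log(provisioned.toFixed(4), activeOnly.toFixed(4));
```

Passing a different utilization shows how the bill scales with actual CPU use rather than with the instance size.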

See the documentation to learn more about Containers, Sandboxes, and associated pricing.

The threat events platform now has threat insights available for some relevant parent events. Threat intelligence analyst users can access these insights for their threat hunting activity.

Insights are also highlighted in the Cloudflare dashboard with a small lightning icon. An insight can refer to multiple connected events that are potentially part of the same attack or campaign and associated with the same threat actor.

For more information, refer to Analyze threat events.

This week’s release introduces a critical detection for CVE-2025-61757, a vulnerability in the Oracle Identity Manager REST WebServices component.

Key Findings

This flaw allows unauthenticated attackers with network access over HTTP to fully compromise the Identity Manager, potentially leading to a complete takeover.

Impact

Oracle Identity Manager (CVE-2025-61757): Exploitation could allow an unauthenticated remote attacker to bypass security checks by sending specially crafted requests to the application's message processor. This enables the creation of arbitrary employee accounts, which can be leveraged to modify system configurations and achieve full system compromise.

| Ruleset | Rule ID | Legacy Rule ID | Description | Previous Action | New Action | Comments |
|---|---|---|---|---|---|---|
| Cloudflare Managed Ruleset | | N/A | Oracle Identity Manager - Pre-Auth RCE - CVE:CVE-2025-61757 | N/A | Block | This is a new detection. |

Workers Builds now supports up to 64 environment variables, and each environment variable can be up to 5 KB in size. The previous limit was 5 KB total across all environment variables.

This change enables better support for complex build configurations, larger application settings, and more flexible CI/CD workflows.
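If you generate build variables programmatically, a pre-flight check against these limits can catch problems early. The sketch below is a hypothetical helper, not part of Workers Builds. It assumes that 5 KB means 5,120 bytes and that the size limit applies to the UTF-8 encoded value only; both are assumptions.

```ts
const MAX_VARIABLES = 64;
const MAX_VALUE_BYTES = 5 * 1024; // assumption: 1 KB = 1,024 bytes

// Returns a list of human-readable problems; an empty list means the
// variables fit within the limits as interpreted above.
function validateBuildVariables(vars: Record<string, string>): string[] {
  const problems: string[] = [];
  const names = Object.keys(vars);

  if (names.length > MAX_VARIABLES) {
    problems.push(`Too many variables: ${names.length} (limit ${MAX_VARIABLES})`);
  }

  const encoder = new TextEncoder();
  for (const name of names) {
    // Measure the encoded size, since multi-byte characters count for more than one byte.
    const size = encoder.encode(vars[name]).length;
    if (size > MAX_VALUE_BYTES) {
      problems.push(`${name} is ${size} bytes (limit ${MAX_VALUE_BYTES})`);
    }
  }
  return problems;
}
```

Calling `validateBuildVariables({ API_URL: "https://example.com" })` returns an empty array.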

For more details, refer to the build limits documentation.

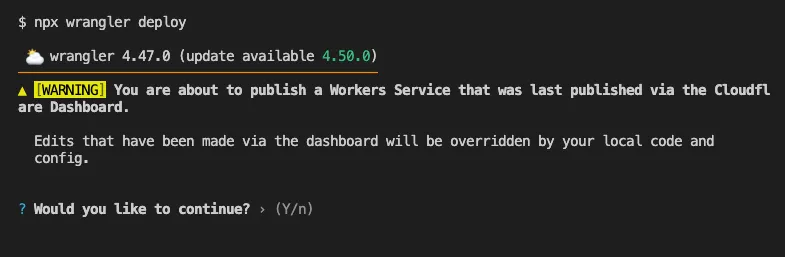

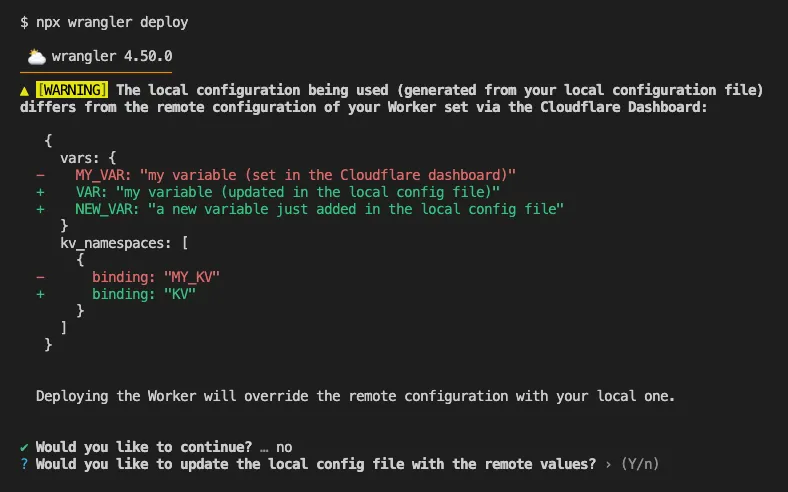

Until now, if a Worker had been previously deployed via the Cloudflare Dashboard ↗, a subsequent deployment done via the Cloudflare Workers CLI, Wrangler (through the deploy command), would allow the user to override the Worker's dashboard settings without providing details on what dashboard settings would be lost.

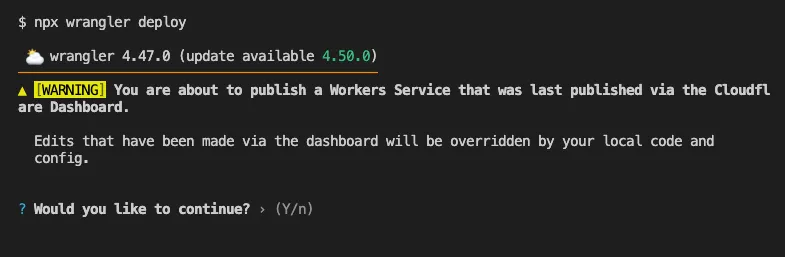

Now instead, wrangler deploy presents a helpful representation of the differences between the local configuration and the remote dashboard settings, and offers to update your local configuration file for you.

The example below shows wrangler deploy before and after this change, when a local configuration is expected to override a Worker's dashboard settings:

Before

After

Also, if instead Wrangler detects that a deployment would override remote dashboard settings but in an additive way, without modifying or removing any of them, it will simply proceed with the deployment without requesting any user interaction.
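The distinction between an additive change and one that modifies or removes settings can be sketched as a simple comparison. This is an illustration of the idea only, not Wrangler's actual diffing logic: the `Settings` type and `isAdditiveChange` helper are hypothetical, and values are compared through their JSON serialization, which is sensitive to key order.

```ts
type Settings = Record<string, unknown>;

// A change is treated as additive when every remote setting is still present
// in the local configuration with the same value. Extra local keys are allowed.
function isAdditiveChange(remote: Settings, local: Settings): boolean {
  return Object.keys(remote).every(
    (key) => key in local && JSON.stringify(local[key]) === JSON.stringify(remote[key]),
  );
}

const remoteSettings = { compatibility_date: "2025-01-01", vars: { MODE: "prod" } };

// Adds a key without touching existing ones: additive.
console.log(isAdditiveChange(remoteSettings, { ...remoteSettings, logpush: true }));

// Drops `vars`: not additive, so a diff and a prompt would be appropriate.
console.log(isAdditiveChange(remoteSettings, { compatibility_date: "2025-01-01" }));
```

The first call logs `true` and the second logs `false`.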

Update to Wrangler v4.50.0 or greater to take advantage of this improved deploy flow.

Earlier this year, we announced the launch of the new Terraform v5 Provider. We are aware of the high number of issues reported by the Cloudflare community related to the v5 release. We have committed to releasing improvements on a 2-3 week cadence ↗ to ensure its stability and reliability, including the v5.13 release. We have also pivoted from an issue-to-issue approach to a resource-per-resource approach ↗ - we will be focusing on specific resources to not only stabilize the resource but also ensure it is migration-friendly for those migrating from v4 to v5.

Thank you for continuing to raise issues. They make our provider stronger and help us build products that reflect your needs.

This release includes new features, new resources and data sources, bug fixes, updates to our Developer Documentation, and more.

Please be aware that there are breaking changes for the cloudflare_api_token and cloudflare_account_token resources. These changes eliminate configuration drift caused by policy ordering differences in the Cloudflare API.

For more specific information about the changes or the actions required, please see the detailed Repository changelog ↗.

We suggest holding off on migration to v5 while we work on stabilization. This will help you avoid any blocking issues while the Terraform resources are actively being stabilized. We will be releasing a new migration tool in March 2026 to help support v4 to v5 transitions for our most popular resources.

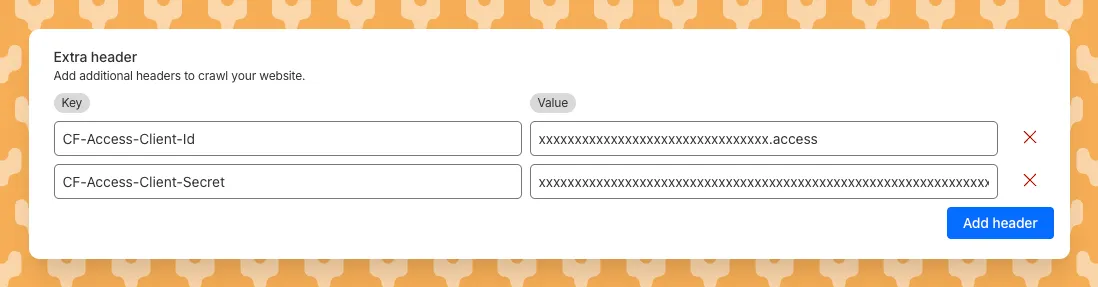

AI Search now supports custom HTTP headers for website crawling, solving a common problem where valuable content behind authentication or access controls could not be indexed.

Previously, AI Search could only crawl publicly accessible pages, leaving knowledge bases, documentation, and other protected content out of your search results. With custom headers support, you can now include authentication credentials that allow the crawler to access this protected content.

This is particularly useful for indexing content like:

To add custom headers when creating an AI Search instance, select Parse options. In the Extra headers section, you can add up to five custom headers per Website data source.

For example, to crawl a site protected by Cloudflare Access, you can add service token credentials as custom headers:

```
CF-Access-Client-Id: your-token-id.access
CF-Access-Client-Secret: your-token-secret
```

The crawler will automatically include these headers in all requests, allowing it to access protected pages that would otherwise be blocked.
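When preparing headers for a Website data source, it can be useful to assemble them in one place and check the five-header limit up front. The helper below is a hypothetical sketch for your own tooling, not an AI Search API; the function name and the error message are assumptions.

```ts
const MAX_EXTRA_HEADERS = 5; // limit per Website data source, as stated above

// Combines Cloudflare Access service token credentials with any other custom
// headers and rejects the set if it exceeds the limit.
function buildCrawlerHeaders(
  clientId: string,
  clientSecret: string,
  extra: Record<string, string> = {},
): Record<string, string> {
  const headers: Record<string, string> = {
    ...extra,
    "CF-Access-Client-Id": clientId,
    "CF-Access-Client-Secret": clientSecret,
  };
  const count = Object.keys(headers).length;
  if (count > MAX_EXTRA_HEADERS) {
    throw new Error(`Too many custom headers: ${count} (limit ${MAX_EXTRA_HEADERS})`);
  }
  return headers;
}

const crawlerHeaders = buildCrawlerHeaders("your-token-id.access", "your-token-secret");
```

The returned object contains the two Access headers and can be copied into the Extra headers section.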

Learn more about configuring custom headers for website crawling in AI Search.

We will listen carefully to your feedback and continue to find comprehensive ways to communicate updates on your submissions. Your submissions will continue to be addressed at an even greater rate than before, fuelling faster and more accurate email security improvement.